First, we define a function that trains and plots a decision tree. Then, we pass this function, along with a set of values for each of the parameters of interest, to the interactive function.
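The two steps described here can be sketched roughly as follows. This is a minimal illustration, not the article's original code: the helper names `tree_dot` and `build_widget`, the choice of parameters and the value ranges are assumptions made for the example. The tree is exported as a DOT string first, so the training step can be checked without a notebook, and the widget is only built when `build_widget` is called inside Jupyter.

```python
from sklearn.datasets import load_wine
from sklearn.tree import DecisionTreeClassifier, export_graphviz

data = load_wine()


def tree_dot(criterion="gini", splitter="best", max_depth=3,
             min_samples_split=2, min_samples_leaf=1):
    """Train a decision tree on the wine data and return it as DOT source.

    Hypothetical helper for this sketch; the parameter list is an assumption.
    """
    estimator = DecisionTreeClassifier(
        random_state=0,
        criterion=criterion,
        splitter=splitter,
        max_depth=max_depth,
        min_samples_split=min_samples_split,
        min_samples_leaf=min_samples_leaf,
    )
    estimator.fit(data.data, data.target)
    return export_graphviz(
        estimator,
        out_file=None,
        feature_names=data.feature_names,
        class_names=list(data.target_names),
        filled=True,
    )


def build_widget():
    """Build the interactive plot; call this inside a Jupyter notebook.

    Requires ipywidgets, the graphviz Python package and the Graphviz binaries.
    """
    from graphviz import Source
    from IPython.display import SVG, display
    from ipywidgets import interactive

    def plot_tree(criterion="gini", splitter="best", max_depth=3,
                  min_samples_split=2, min_samples_leaf=1):
        dot = tree_dot(criterion, splitter, max_depth,
                       min_samples_split, min_samples_leaf)
        display(SVG(Source(dot).pipe(format="svg")))

    # Lists become dropdowns, (min, max) tuples become integer sliders.
    return interactive(
        plot_tree,
        criterion=["gini", "entropy"],
        splitter=["best", "random"],
        max_depth=(1, 5),
        min_samples_split=(2, 10),
        min_samples_leaf=(1, 5),
    )
```

In a notebook cell, evaluating `build_widget()` as the last expression should render the controls together with the tree, which is redrawn whenever a control changes.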


To install ipywidgets with pip, run `pip install ipywidgets` followed by `jupyter nbextension enable --py widgetsnbextension`. With conda, run `conda install -c conda-forge ipywidgets`.

For this application, we will use the interactive function.

We usually assess the effect of these parameters by looking at accuracy metrics. To get a grasp of how changes in parameters affect the structure of the tree, we could again visualize a tree at each stage. Instead of plotting a tree each time we make a change, we can make use of Jupyter Widgets (ipywidgets) to build an interactive plot of our tree. Jupyter widgets are interactive elements that allow us to render controls inside the notebook. There are two options to install ipywidgets: through pip and through conda.


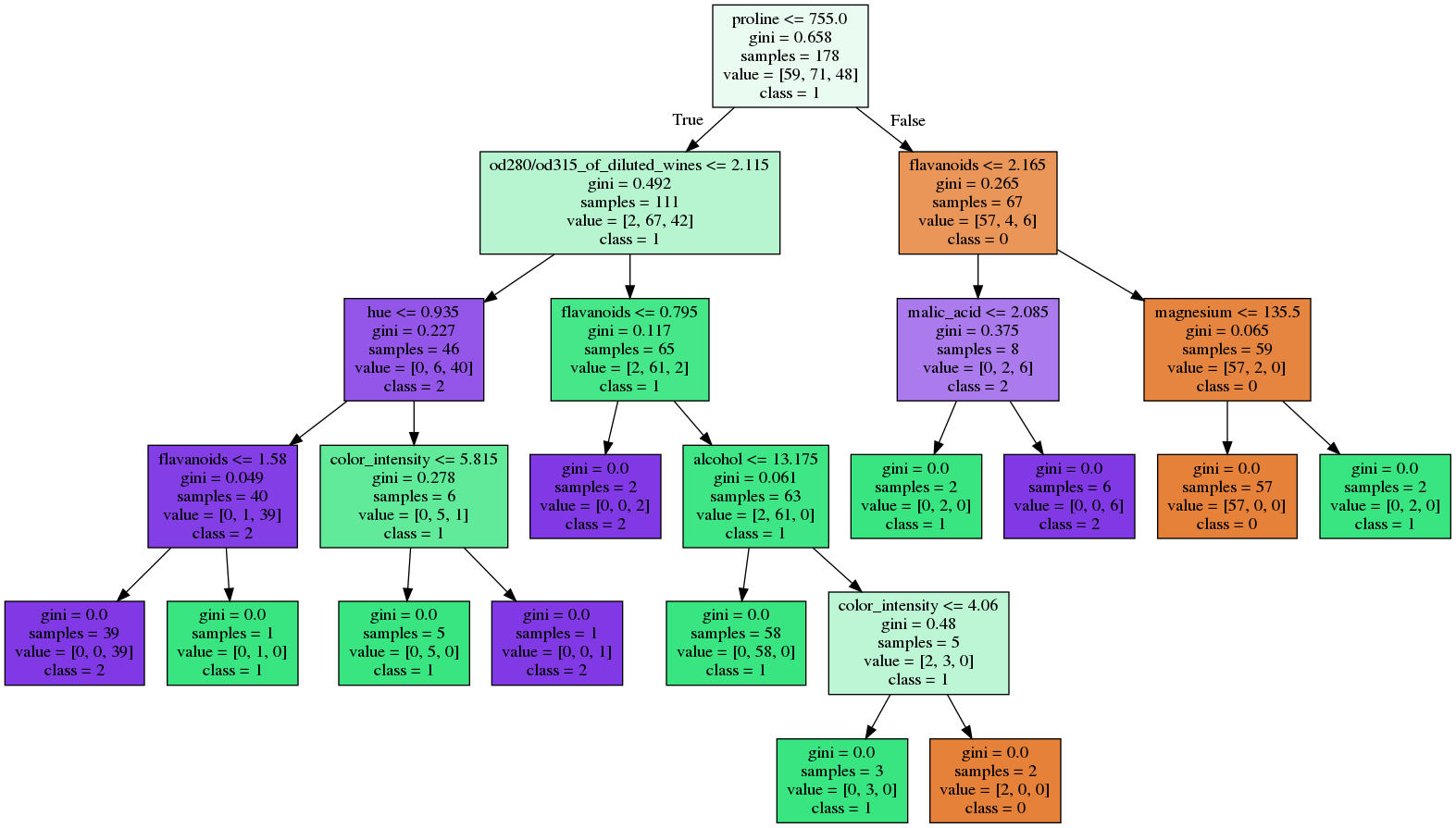

While easy to understand, decision trees tend to overfit the data by constructing overly complex models. Overfitted models will most likely not generalize well to “unseen” data. Two main approaches to prevent overfitting are pre-pruning and post-pruning. Pre-pruning means restricting the growth of a tree, for example its depth, while it is being built, whereas post-pruning removes non-informative nodes after the tree has been built. Scikit-learn's decision tree classifier has traditionally offered only pre-pruning (since version 0.22 it also supports cost-complexity post-pruning through the ccp_alpha parameter). Pre-pruning can be controlled through several parameters, such as the maximum depth of the tree (max_depth), the minimum number of samples required for a node to keep splitting (min_samples_split) and the minimum number of instances required for a leaf (min_samples_leaf). The accompanying plot shows a decision tree trained on the same data, this time with max_depth = 3. This model is less deep and thus less complex than the one we trained and plotted initially.

Other than pre-pruning parameters, a decision tree has a series of other parameters that we try to optimize whenever building a classification model.
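As a rough illustration of how the pre-pruning parameters constrain the tree, the sketch below trains three classifiers on the wine data and compares their size. The specific values (a depth of 3, 10 samples per split, 5 samples per leaf) and the fixed `random_state` are assumptions chosen for the example, not recommendations.

```python
from sklearn.datasets import load_wine
from sklearn.tree import DecisionTreeClassifier

X, y = load_wine(return_X_y=True)

# Unrestricted tree: grows until every leaf is pure.
full = DecisionTreeClassifier(random_state=0).fit(X, y)

# Pre-pruned by depth: no path from the root may exceed 3 splits.
shallow = DecisionTreeClassifier(max_depth=3, random_state=0).fit(X, y)

# Pre-pruned by sample counts: a node needs at least 10 samples to be split,
# and every leaf must keep at least 5 samples.
conservative = DecisionTreeClassifier(
    min_samples_split=10, min_samples_leaf=5, random_state=0
).fit(X, y)

# Leaves are the nodes without children (children_left == -1).
leaf_mask = conservative.tree_.children_left == -1
min_leaf_size = int(conservative.tree_.n_node_samples[leaf_mask].min())

for name, model in [("full", full), ("shallow", shallow),
                    ("conservative", conservative)]:
    print(f"{name}: depth={model.get_depth()}, leaves={model.get_n_leaves()}")
print(f"smallest leaf in the conservative tree: {min_leaf_size} samples")
```

Comparing the printed depth and leaf counts gives a quick, numeric view of the same simplification that the plotted trees show visually.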

Decision tree classifiers build a sequence of simple if/else rules on the training data, through which they predict the target value. Decision trees are simple to interpret due to their structure and the ability we have to visualize the modeled tree. Using sklearn's export_graphviz function, we can display the tree within a Jupyter notebook. For this demonstration, we will use the sklearn wine data set.

    from sklearn.tree import DecisionTreeClassifier, export_graphviz
    from sklearn.datasets import load_wine
    from IPython.display import SVG, display
    from graphviz import Source

    # load dataset
    data = load_wine()
    # feature matrix
    X = data.data
    # target vector
    y = data.target
    # feature names, used to label the split conditions
    labels = data.feature_names
    # print dataset description
    print(data.DESCR)

    # train an unrestricted decision tree
    estimator = DecisionTreeClassifier()
    estimator.fit(X, y)

    # class_names was left empty in the source; the data set's own
    # target names are assumed here
    graph = Source(export_graphviz(estimator, out_file=None,
                                   feature_names=labels,
                                   class_names=list(data.target_names),
                                   filled=True))
    display(SVG(graph.pipe(format='svg')))

In the tree plot, each node contains the condition (if/else rule) that splits the data, along with a series of other metrics of the node. Gini refers to the Gini impurity, a measure of the impurity of the node, i.e. how homogeneous the samples within the node are. We say that a node is pure when all its samples belong to the same class. In that case, there is no need for a further split and the node is called a leaf. Samples is the number of instances in the node, while the value array shows the distribution of these instances per class. At the bottom we see the majority class of the node. When the filled option of export_graphviz is set to True, each node gets colored according to its majority class.
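The Gini impurity shown in each node can be reproduced from that node's value array as one minus the sum of squared class proportions. The small sketch below is only an illustration: the helper name `gini_impurity` is hypothetical, and the counts used for the root node are the class sizes of the wine data set (59, 71 and 48 samples).

```python
def gini_impurity(class_counts):
    """Return 1 - sum(p_k ** 2), where p_k is the share of class k in the node."""
    total = sum(class_counts)
    if total == 0:
        return 0.0
    return 1.0 - sum((count / total) ** 2 for count in class_counts)


# Root node of a tree trained on the full wine data set: all 178 samples.
root_gini = gini_impurity([59, 71, 48])

# A pure node: every sample belongs to the same class, so the impurity is 0.
pure_gini = gini_impurity([0, 71, 0])

print(round(root_gini, 3), pure_gini)
```

A value close to 0 indicates a nearly homogeneous node, while larger values indicate that the classes are more evenly mixed.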

Decision trees are widely used supervised models for classification and regression tasks. In this article, we will talk about decision tree classifiers and how we can dynamically visualize them.